
1/2/2024 Caret random forest

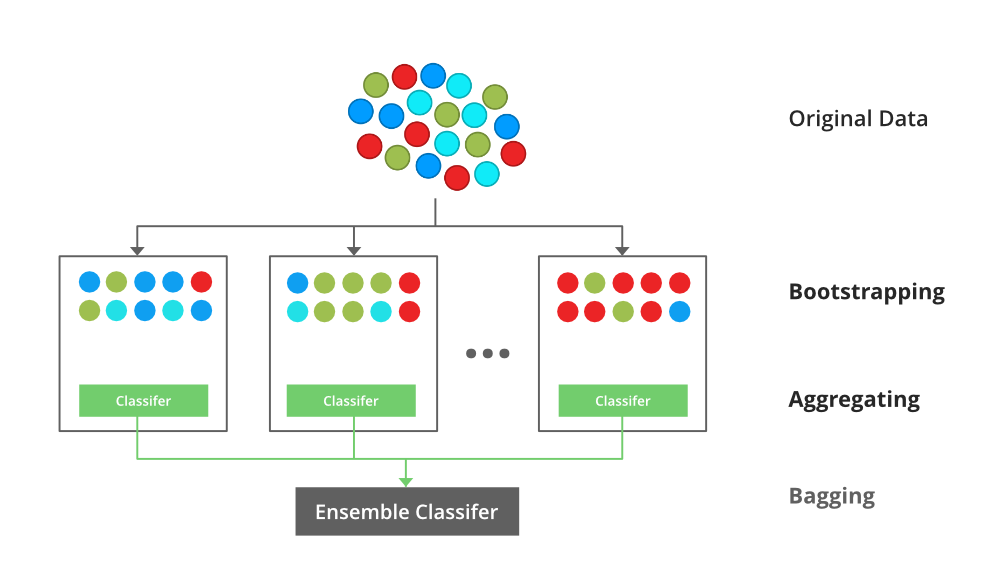

Bootstrap aggregation (i.e., bagging) is a general technique that combines bootstrapping with any regression or classification algorithm to construct an ensemble. The process of bagging a model is very simple:

1. Generate \(k\) bootstrap samples of the original data.
2. Train the model on each of the samples.
3. For a new observation, each model provides a prediction, and the \(k\) predictions are then averaged to give the final prediction.

Since the models in an ensemble are identically distributed (i.e., i.d.), the expectation of the average of the \(k\) predictions is the same as that of any individual prediction, meaning that the bias of the ensemble is the same as that of a single tree. Bagging therefore improves model performance by reducing variance rather than bias (see my other post that explains the bias-variance tradeoff), which makes it especially effective for models with low bias but high variance. Decision trees are noisy but have relatively low bias, so they can benefit a lot from bagging.

The number of trees (i.e., \(k\)) is a hyperparameter for bagged trees. Generally speaking, the larger the number of trees, the lower the variance and hence the better the performance of the ensemble. However, most of the performance gain is typically achieved with the first 10 bootstrap samples. For some models with high variance (e.g., decision trees), the gain is apparent up to about 20 bootstrap samples and then tails off, with very limited improvement beyond that point. It is usually pointless to construct an ensemble with more than 50 bootstrap samples, since the marginal performance gain is not worth the extra computational cost and memory requirements.

It should be noted that although the bagged trees are identically distributed, they are not necessarily independent. Since the bootstrap samples used to train each individual tree come from the same data set, it is not surprising that the trees may share some similar structure.
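The bagging procedure described in this post (draw bootstrap samples, train one model per sample, average the predictions) can be sketched in a few lines. The code below is only an illustration in plain Python, not how caret implements it: the tiny one-feature regression tree, the function names (`fit_tree`, `fit_bagged_trees`, `predict_bagged`) and the toy data are all assumptions made up for this example.

```python
import random
from statistics import mean


def fit_tree(xs, ys, depth=3, min_leaf=2):
    """Fit a tiny 1-D regression tree (hypothetical helper for this sketch).

    Returns either a leaf value or a tuple (threshold, left_subtree, right_subtree).
    """
    if depth == 0 or len(xs) < 2 * min_leaf or len(set(xs)) == 1:
        return mean(ys)
    pairs = sorted(zip(xs, ys))
    best = None
    for i in range(min_leaf, len(pairs) - min_leaf + 1):
        if pairs[i - 1][0] == pairs[i][0]:
            continue  # cannot split between two equal x values
        left = [y for _, y in pairs[:i]]
        right = [y for _, y in pairs[i:]]
        ml, mr = mean(left), mean(right)
        sse = sum((y - ml) ** 2 for y in left) + sum((y - mr) ** 2 for y in right)
        if best is None or sse < best[0]:
            best = (sse, i)
    if best is None:
        return mean(ys)
    i = best[1]
    threshold = (pairs[i - 1][0] + pairs[i][0]) / 2
    lx, ly = zip(*pairs[:i])
    rx, ry = zip(*pairs[i:])
    return (threshold,
            fit_tree(lx, ly, depth - 1, min_leaf),
            fit_tree(rx, ry, depth - 1, min_leaf))


def predict_tree(tree, x):
    """Walk down the tree until a leaf value is reached."""
    while isinstance(tree, tuple):
        threshold, left, right = tree
        tree = left if x <= threshold else right
    return tree


def fit_bagged_trees(xs, ys, k=25, seed=0):
    """Steps 1 and 2: draw k bootstrap samples and train one tree on each."""
    rng = random.Random(seed)
    n = len(xs)
    trees = []
    for _ in range(k):
        idx = [rng.randrange(n) for _ in range(n)]  # n rows drawn with replacement
        trees.append(fit_tree([xs[i] for i in idx], [ys[i] for i in idx]))
    return trees


def predict_bagged(trees, x):
    """Step 3: average the k individual predictions."""
    return mean(predict_tree(t, x) for t in trees)


# Toy data (made up for illustration): y = sqrt(x) plus Gaussian noise.
data_rng = random.Random(42)
xs = [data_rng.uniform(0, 10) for _ in range(200)]
ys = [x ** 0.5 + data_rng.gauss(0, 0.3) for x in xs]

trees = fit_bagged_trees(xs, ys, k=25)
print(predict_bagged(trees, 4.0))
```

Here `k=25` is an arbitrary choice in the range where the post says most of the gain has already been realized. Rerunning with different values of `k` is a quick way to see the diminishing returns for yourself.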

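To make the point about identically distributed but dependent trees a bit more concrete, here is a standard variance calculation. It rests on assumptions that are not stated in the post itself: each tree's prediction \(T_i(x)\) has the same variance \(\sigma^2\), and every pair of trees has the same correlation \(\rho\).

\[
\operatorname{Var}\left(\frac{1}{k}\sum_{i=1}^{k} T_i(x)\right) = \rho\,\sigma^2 + \frac{1-\rho}{k}\,\sigma^2
\]

Under these assumptions, only the second term shrinks as \(k\) grows, which is consistent with the diminishing returns from adding more bootstrap samples. The first term does not depend on \(k\), so correlation between the trees limits how much variance averaging alone can remove.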